Gravity is a Problem

Millions of AI agents are browsing the web every day. They’re reading articles, parsing documentation, and ingesting research. But the web wasn’t built for agents. It was built for humans.

When an agent encounters web content today, it must:

1. Fetch the full page [1]

2. Parse all the tags and raw text

3. Infer intent and determine relevancy

4. If needed, generate semantic embeddings for later retrieval

Each agent does this independently. Every time. For every piece of content.

That’s tokens. That’s compute. That’s time spent parsing instead of reasoning.

Now scale that to millions of agents. Billions of documents. Quintillions of computational cycles (and enough boiling water to make a meeeean pot of coffee) and what do we have? A vast sea of agents that each independently discovered something the publisher knew at the moment of publication: the essential meaning of the document itself.

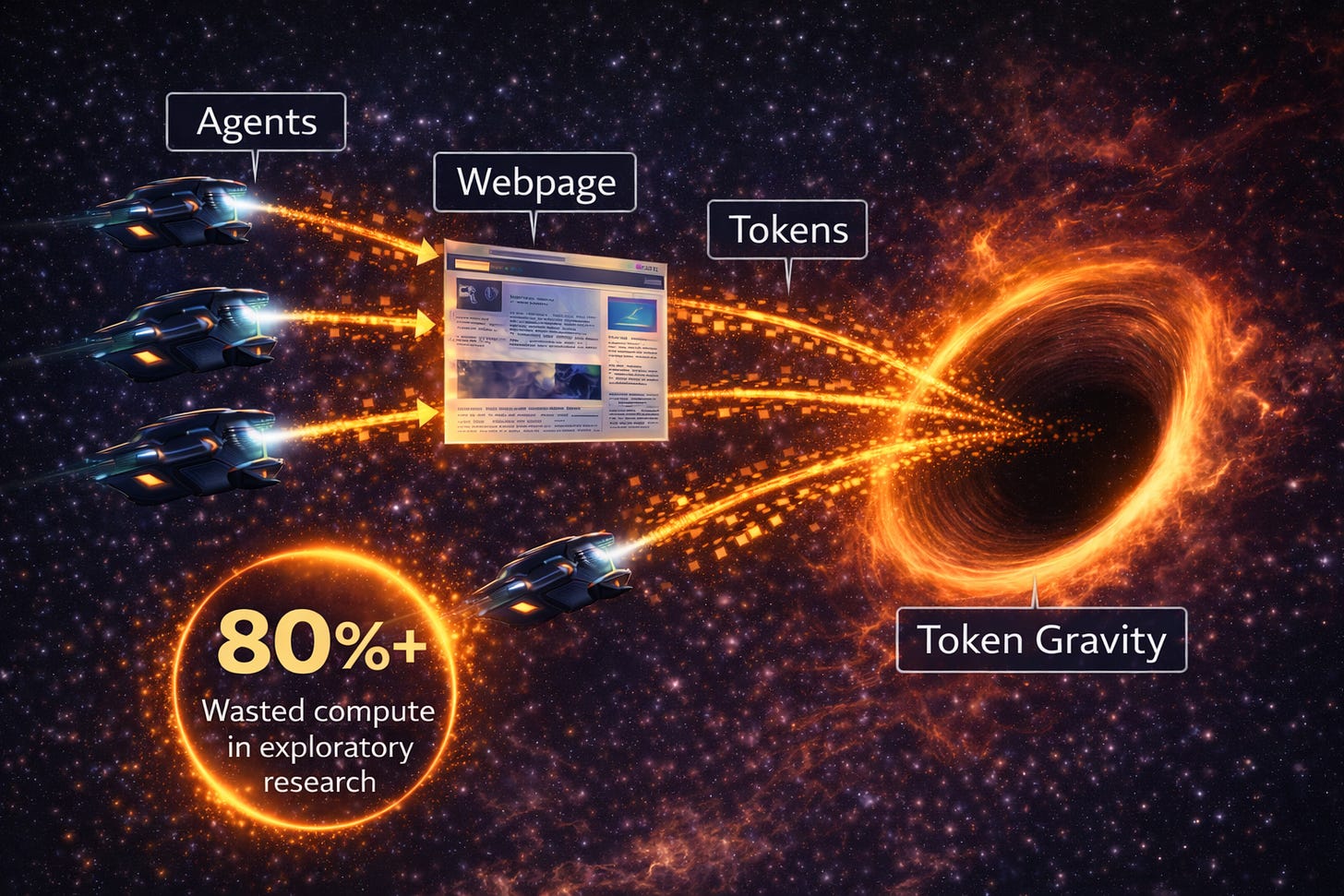

I call this token gravity: the computational weight of forcing every agent to re-distill the same meaning from the same content, every time.

Figure 1: In exploratory agent workflows, up to ~80% of tokens may be spent analyzing pages that are ultimately discarded (simulated skip rates: 80–90%).

A Possible Solution

What if publishers did that work once?

What if each article included a small, structured semantic block that agents could parse before committing to full retrieval, parsing, and embedding?

Not SEO metadata.

Not Open Graph tags.

A lightweight semantic layer designed specifically for reasoning systems.

That’s the idea behind Zero Gravity: a simple format that lets publishers declare what their content is about before agents spend tokens figuring it out. Publishers can serve it directly as part of the page content, via a header script, or via a JSON sidecar, whatever best fits their workflow.

Here’s what Zero Gravity looks like:

encoding: "zero-gravity"

version: "0.1"

author: "Erik Burns"

title: "Zero Gravity: A Lightweight Semantic Encoding for the Agentic Web"

intent: "Propose and evangelize Zero Gravity, a lightweight semantic stamp publishers embed in content so agents can pre-filter relevance without re-parsing full documents, eliminating redundant compute waste across the agentic web"

metaindex:

- "token gravity — computational waste from redundant agent parsing"

- "Zero Gravity stamp — publisher-declared semantic block for agent pre-filtering"

- "semantic bootstrap: ~200 tokens instead of ~8,000 tokens per article"

- "query-agnostic semantic storage vs query-relative relevancy scores"

- "Zero Gravity vs Semantic Web — AI makes annotation trivial now"

- "HabitualOS — personal agentic operating system powering real-world ZG usage"

- "agent-side ZG generation for open, reusable knowledge stores"

- "publishers declare intent before agents infer it"

model: "claude-sonnet-4-6"

With a simple declaration, an agent can now understand this article’s thesis and key concepts. Parsing this Zero Gravity example requires ~200 tokens instead of ~8,000 tokens for the entire article. If an agent thinks it’s relevant, it proceeds to fetch the full content. Otherwise, it doesn’t. No wasted compute.
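To make the trade-off concrete, here is a rough back-of-the-envelope sketch. It assumes every candidate page costs the same ~8,000 tokens to read in full, every stamp costs ~200 tokens, and the agent reads the stamp first and fetches the full page only when it is relevant. The function names are illustrative and not part of the Zero Gravity project; the skip rates are the simulated 80–90% from Figure 1.

```python
# Token figures are the article's approximations, not measurements.
FULL_ARTICLE_TOKENS = 8_000
STAMP_TOKENS = 200


def expected_tokens_per_page(skip_rate: float,
                             stamp_tokens: int = STAMP_TOKENS,
                             full_tokens: int = FULL_ARTICLE_TOKENS) -> float:
    """Expected cost when every stamp is read and only relevant pages are read in full."""
    if not 0.0 <= skip_rate <= 1.0:
        raise ValueError("skip_rate must be between 0 and 1")
    return stamp_tokens + (1.0 - skip_rate) * full_tokens


def savings_fraction(skip_rate: float) -> float:
    """Fraction of tokens saved relative to always reading the full article."""
    return 1.0 - expected_tokens_per_page(skip_rate) / FULL_ARTICLE_TOKENS


if __name__ == "__main__":
    for rate in (0.8, 0.9):
        print(f"skip rate {rate:.0%}: "
              f"{expected_tokens_per_page(rate):,.0f} tokens/page, "
              f"{savings_fraction(rate):.1%} saved")
```

Under these assumptions the expected cost drops from 8,000 to about 1,800 tokens per page at an 80% skip rate (77.5% saved) and to about 1,000 at 90% (87.5% saved). The stamp is pure overhead for pages the agent ends up reading anyway, so the approach only pays off once the skip rate exceeds 200 / 8,000 = 2.5%.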

Want the technical details? The full specification is on GitHub, where you can explore the format and even contribute to its evolution.

Two Positions in the Pipeline

Zero Gravity has two distinct applications depending on where you sit.

Publisher-side

Publishers can declare semantic meaning once at the time of publication. This approach allows publishers to influence how they are indexed, and can significantly decrease agentic compute waste.

Agent-side

After fetching raw content — directly or via a service like Tavily or Exa — an agent can generate a ZG block and store it once in any data store. The result is a content-absolute semantic record that serves any future query, without re-fetching or re-processing.

The agent-side use case is subtle but important, and likely where Zero Gravity’s strongest value lies. Retrieval tools score documents by relevance to a given query. But query-specific relevancy scores are meaningless once your query changes. A ZG block stores retrieved content in an open, query-agnostic format, keeping it indexable, embeddable, and reusable across all future searches.
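A minimal sketch of that idea, under stated assumptions: the `Stamp` and `StampStore` classes below are hypothetical, the store is an in-memory dictionary standing in for "any data store", and the term-overlap score is a naive placeholder for whatever embedding or ranking you actually use. The point it illustrates is that nothing about a query is persisted, so the same record can answer unrelated queries later.

```python
from dataclasses import dataclass, field


@dataclass
class Stamp:
    """The subset of ZG fields this sketch needs."""
    url: str
    title: str
    intent: str
    metaindex: list[str] = field(default_factory=list)

    def terms(self) -> set[str]:
        """Lower-cased words from every semantic field, used for naive matching."""
        text = " ".join([self.title, self.intent, *self.metaindex]).lower()
        return {word.strip(".,:;()\"'") for word in text.split()} - {""}


class StampStore:
    """Stores one stamp per URL; no query-specific score is ever saved."""

    def __init__(self) -> None:
        self._stamps: dict[str, Stamp] = {}

    def add(self, stamp: Stamp) -> None:
        self._stamps[stamp.url] = stamp

    def search(self, query: str) -> list[tuple[str, float]]:
        """Score stored stamps against a query at read time (term-overlap ratio)."""
        wanted = {word for word in query.lower().split() if word}
        results = []
        for stamp in self._stamps.values():
            overlap = len(wanted & stamp.terms())
            if overlap:
                results.append((stamp.url, overlap / len(wanted)))
        return sorted(results, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    store = StampStore()
    store.add(Stamp(
        url="https://example.com/zero-gravity",  # placeholder URL
        title="Zero Gravity: A Lightweight Semantic Encoding for the Agentic Web",
        intent="Let agents pre-filter relevance without re-parsing full documents",
        metaindex=["agent-side ZG generation for open, reusable knowledge stores"],
    ))
    # Two unrelated queries are answered from the same stored record.
    print(store.search("reusable knowledge stores"))
    print(store.search("semantic encoding"))
```

Because scoring happens at read time, swapping the overlap ratio for embedding similarity changes only `search`; the stored records stay the same.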

Didn’t We Try This Before?

Tim Berners-Lee proposed something similar in the early 2000s: the Semantic Web. It asked publishers to annotate content with formal ontologies so machines could understand meaning. It failed because it was too complex and asked too much of publishers for too little immediate benefit.

Zero Gravity is different in three basic ways:

AI makes it trivial.

The Semantic Web required learning RDF and formal logic. Zero Gravity takes 30 seconds with an LLM. Point an agent at your article, generate a stamp, done. You can even write it by hand if you want.

Agents are everywhere now.

In 2003, machines weren’t meaningfully consuming content. Today, agents drive RAG pipelines, research assistants, and citation systems. The infrastructure exists. The demand is real.

Publishers get something too.

Zero Gravity isn’t just altruism. It gives you:

Semantic targeting: You control the summary instead of letting agents guess or hallucinate.

Agent feedback: If agents are consuming your content, you should know what they’re extracting. Zero Gravity shows you what semantic signal your writing actually produces, which helps you see if your ideas are landing clearly or getting lost in the mix.

Enhanced discovery: Structured semantic content can be parsed faster and more cheaply than unstructured HTML. This means agents are more likely to include your content in their results.

In a nutshell, the key difference from the Semantic Web is this: Zero Gravity offers immediate utility. You can stamp your content today. Agents can use it today. Network effects are a bonus, not a requirement.

How I’m Using Zero Gravity

I’m testing Zero Gravity inside HabitualOS, a personal agentic operating system I’m building to support my job search in AI and health tech. Part of HabitualOS is a structured research assistant that helps me track companies, catalog articles, and explore semantic connections across what I’m reading.

I’m currently using Zero Gravity in two ways:

Research support

My agents pull articles and thought pieces from across health tech and AI. Zero Gravity distills them into clean semantic blocks I can embed and search, so I can spot trends, find unexpected overlaps, and show up to conversations more fully informed.

Publishing my writing

Every article I publish on Substack gets a Zero Gravity stamp. I’m doing this partly so agents can parse it efficiently, and partly to demonstrate the pattern in practice.

The format is evolving as I use it. If there’s interest, I’ll share what I’m learning.
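For readers curious how an agent might pick up a stamp embedded in a page, here is a small sketch. It assumes the stamp is wrapped in the `---BEGIN ZERO GRAVITY---` and `---END ZERO GRAVITY---` markers used at the end of this article, and that fields follow the flat `key: "value"` and `- "item"` layout shown in the example. The functions `extract_stamp` and `parse_stamp` are hypothetical helpers, not part of the project, and the reader is deliberately naive rather than a full YAML parser; the specification on GitHub is the authority on the real format.

```python
import re
from typing import Optional

BEGIN = "---BEGIN ZERO GRAVITY---"
END = "---END ZERO GRAVITY---"


def extract_stamp(document: str) -> Optional[str]:
    """Return the text between the delimiters, or None if no stamp is present."""
    start = document.find(BEGIN)
    if start == -1:
        return None
    end = document.find(END, start + len(BEGIN))
    if end == -1:
        return None
    return document[start + len(BEGIN):end].strip()


def parse_stamp(block: str) -> dict:
    """Parse the flat `key: "value"` / `- "item"` layout into a dictionary."""
    fields: dict = {}
    current_list: Optional[list] = None
    for raw in block.splitlines():
        line = raw.strip()
        if not line:
            continue  # the example stamp has blank lines between fields
        if line.startswith("- "):
            # List items belong to the most recent key that had no inline value.
            if current_list is not None:
                current_list.append(line[2:].strip().strip('"'))
            continue
        match = re.match(r"^([A-Za-z_][\w-]*):\s*(.*)$", line)
        if not match:
            continue  # ignore anything that is not a recognizable field
        key, value = match.group(1), match.group(2).strip()
        if value:
            fields[key] = value.strip('"')
            current_list = None
        else:
            current_list = []
            fields[key] = current_list
    return fields
```

An agent could call `extract_stamp` on the fetched text first and fall back to full parsing only when it returns `None`.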

Want to Try it Out?

It’s easy to get started with Zero Gravity. The Zero Gravity GitHub repo provides an agent skill and a CLI, either of which can generate a stamp for any piece of web content.

The project currently supports three ways to create a stamp, depending on your use case:

Option 1. Use the Agent Skill

Point any LLM at your article with the Zero Gravity skill in context. It’ll generate a stamp in seconds. No installation required.

Option 2. Use the customizable CLI

If you’re stamping multiple articles or integrating Zero Gravity into a publishing workflow, the CLI is fastest. This generates a stamp and appends it to your article automatically:

node cli.cjs generate --input article.md --stamp

Option 3. Write it by hand

The format is simple enough to author manually. If you prefer full control (or enjoy using rotary phones), go for it.
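If you do write one by hand, a quick sanity check can catch a forgotten field. The sketch below is illustrative only: the field names come from the example stamp in this article, but treating `encoding`, `version`, `author`, `title`, and `intent` as required (and `model` as optional, since a hand-written stamp has no generating model) is my assumption here, not something the specification is quoted as saying. `validate_stamp` is a hypothetical helper that takes an already-parsed stamp as a dictionary.

```python
# Assumed-required fields, based only on the example stamp in this article.
EXPECTED_STRING_FIELDS = ("encoding", "version", "author", "title", "intent")


def validate_stamp(stamp: dict) -> list[str]:
    """Return a list of human-readable problems; an empty list means none found."""
    problems = []
    for name in EXPECTED_STRING_FIELDS:
        value = stamp.get(name)
        if not isinstance(value, str) or not value.strip():
            problems.append(f"missing or empty field: {name}")

    # The example stamp uses this exact encoding value.
    encoding = stamp.get("encoding")
    if isinstance(encoding, str) and encoding.strip() and encoding != "zero-gravity":
        problems.append('encoding should be "zero-gravity"')

    index = stamp.get("metaindex")
    if not isinstance(index, list) or not index:
        problems.append("metaindex should be a non-empty list")
    elif not all(isinstance(item, str) and item.strip() for item in index):
        problems.append("every metaindex entry should be a non-empty string")
    return problems


if __name__ == "__main__":
    draft = {
        "encoding": "zero-gravity",
        "version": "0.1",
        "author": "Erik Burns",
        "title": "Zero Gravity: A Lightweight Semantic Encoding for the Agentic Web",
        "metaindex": ["publishers declare intent before agents infer it"],
    }
    for problem in validate_stamp(draft):
        print(problem)
```

Returning a list of problems rather than raising on the first one lets you fix a draft in a single pass.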

While I use these tools in production daily, this is the first time I’m publicly sharing them. Try it out, see if it works for you, and tell me what breaks. Community feedback is what helps get any useful framework off the ground.

What’s Next?

This is v0.1. It works, but it’s a starting point, not a destination.

Zero Gravity only reaches its full potential if publishers adopt it. That’s the nature of standards. But even with adoption, I see this as a bootstrap. Eventually, I expect a standards body such as the W3C to adopt new semantic standards for the agentic web. Maybe intent and index become new meta tags. Maybe something better emerges.

For now, the problem is real: agents waste compute re-parsing the same content millions of times, and publishers have no way to declare semantic intent. The gap keeps growing.

Here are some open questions I have:

Would publishers actually want to use this?

How can Zero Gravity support others working in this space?

How should the format evolve to support the publishing apps ecosystem?

Would semantic indexing end up being gamed the way SEO often is today?

If you build agent systems, publish your ideas online, or like to think about how agents encounter the web, I’d value your feedback.

Try it out, break it, and tell me how it needs to evolve.

🪐 Zero Gravity Stamp

Semantic encoding for agents | learn more »

---BEGIN ZERO GRAVITY---

encoding: "zero-gravity"

version: "0.1"

author: "Erik Burns"

title: "Zero Gravity: A Lightweight Semantic Encoding for the Agentic Web"

intent: "Propose and evangelize Zero Gravity, a lightweight semantic stamp publishers embed in content so agents can pre-filter relevance without re-parsing full documents, eliminating redundant compute waste across the agentic web"

metaindex:

- "token gravity — computational waste from redundant agent parsing"

- "Zero Gravity stamp — publisher-declared semantic block for agent pre-filtering"

- "semantic bootstrap: ~200 tokens instead of ~8,000 tokens per article"

- "query-agnostic semantic storage vs query-relative relevancy scores"

- "Zero Gravity vs Semantic Web — AI makes annotation trivial now"

- "HabitualOS — personal agentic operating system powering real-world ZG usage"

- "agent-side ZG generation for open, reusable knowledge stores"

- "publishers declare intent before agents infer it"

model: "claude-sonnet-4-6"

---END ZERO GRAVITY---

[1] Tools like Tavily and Exa have partially solved steps 1-3, returning pre-cleaned, semantically relevant results without the agent ever touching raw HTML. Zero Gravity can significantly lower the cost of these services by making it easier to index the web. It also solves step 4 by offering a stable, query-independent semantic record that retrieval tools don't currently provide.